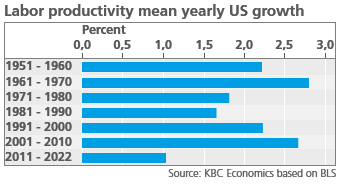

Is the IT revolution failing to lift growth?

“You can see the computer age everywhere but in the productivity statistics.” Economist Robert Solow’s quip from 1987 remains as relevant as ever. The Q2 BLS US Productivity and Costs report again delivered sobering news: labor productivity declined 1.1% from the previous quarter and 2.5% year-on-year. Labor productivity growth since 2011 has been exceptionally low (see graph), which is all the more surprising given the advances in information technology over recent decades.

Why the IT revolution has left so little footprint in the productivity statistics is hotly debated among economists. Techno-pessimists such as Northwestern University professor Robert Gordon1 argue that the high US productivity growth of the 19th and 20th centuries is unlikely to be repeated in this one. He claims that the low-hanging fruit of innovation has already been picked and that new technology will be less impactful. For instance, the invention of self-driving cars will certainly raise US productivity, but its impact will be milder than that of the invention of the car itself. Furthermore, according to Gordon, the impact of the IT revolution on US productivity ran its course in the 1990s. He argues that we will soon see the end of Moore’s law, the observation that the number of transistors on a microchip doubles roughly every two years. Gordon estimates that growth in output per hour will decline to an average of 1.2% per year between 2015 and 2040, down from an average of 2.26% per year between 1920 and 2014.
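To get a feel for what the gap between these two growth rates means, the figures can be compounded over the 25-year projection window. The snippet below is a minimal illustrative sketch, not Gordon's own calculation: it assumes constant annual growth at each of the two averages cited above, and the function and variable names are hypothetical.

```python
import math


def cumulative_growth(annual_rate: float, years: int) -> float:
    """Growth factor after compounding a constant annual rate for `years` years."""
    return (1.0 + annual_rate) ** years


def doubling_time(annual_rate: float) -> float:
    """Years needed for output per hour to double at a constant annual rate."""
    return math.log(2.0) / math.log(1.0 + annual_rate)


# Averages as cited in the text (assumed constant here for illustration).
HISTORICAL_RATE = 0.0226  # 1920-2014
PROJECTED_RATE = 0.012    # 2015-2040
YEARS = 25                # length of the 2015-2040 window

historical = cumulative_growth(HISTORICAL_RATE, YEARS)
projected = cumulative_growth(PROJECTED_RATE, YEARS)

# Share of output per hour "lost" by 2040 relative to the historical pace.
shortfall = 1.0 - projected / historical

print(f"Growth factor at 2.26%: {historical:.2f}")
print(f"Growth factor at 1.20%: {projected:.2f}")
print(f"Shortfall by 2040:      {shortfall:.1%}")
print(f"Doubling time at 2.26%: {doubling_time(HISTORICAL_RATE):.0f} years")
print(f"Doubling time at 1.20%: {doubling_time(PROJECTED_RATE):.0f} years")
```

Under these simplifying assumptions, output per hour rises by roughly 35% over 25 years at the projected pace, against roughly 75% at the historical pace. A difference of about one percentage point per year thus compounds into a sizeable gap in living standards within a generation.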

Economist Seda Basihos2 goes even a step further. According to her, IT innovation is distinct from other types of innovation because its life cycle is much shorter than that of other products. She claims that the time it takes to adapt to a new information technology is longer than its life cycle. This constant updating of technology thus leads to skill erosion in the labor market. According to her estimates, computers are responsible for 30% of the US productivity decline of recent decades.

Techno-optimists contest these claims. According to them, the current method of calculating GDP underestimates the welfare generated by new technology. Thanks to the IT revolution, many products such as encyclopedias, dictionaries, newspaper articles, music clips and online courses are freely available on the internet. Because they are free, they are excluded from GDP figures, yet it would be hard to argue that they generate no value or welfare. Similarly, a smartphone is counted as a single product in GDP figures and not as the sum of a cellphone, an MP3 player, a GPS, a calculator, a watch, a timer, a game console and so on. Reviewing the economic literature, economists Hulten and Nakamura3 calculate that the benefit of innovation to US consumers is underestimated by USD 1 trillion or more (approximately 4% of current GDP).

Several techno-optimists also contest the claim that IT-related productivity has already reached most of its potential. Broad-based technological innovations typically take decades to reach their full potential. Before electrification, factories were multi-storied buildings that relied on a single steam engine. When electricity arrived, most firms simply replaced their steam engines with electric motors without adjusting their factory layout. Only decades later did entrepreneurs such as Henry Ford harness the true potential of electricity by optimizing their manufacturing processes. Similarly, many companies today have simply embedded IT solutions in their existing processes rather than overhauling their whole way of working. Those that have managed to redesign their processes have increasingly outperformed their peers. According to the OECD, frontier firms generated on average 3% higher annual productivity growth than their non-frontier peers in the first decade of this century4. If creative destruction runs its course, overall productivity may well be pushed upwards.

Furthermore, many IT-based innovations have yet to reach their full potential. AI, quantum computing, blockchain, robotics, 3D printing and self-driving car technologies are all still in their infancy, and each of them has the potential to disrupt major industries. In a 2013 study, Frey and Osborne concluded that 47% of total employment in the United States is at “high risk” of automation (i.e. in an occupation with at least a 70% chance of automation) in the next decade or two5. In the same vein, a 2017 McKinsey Global Institute study estimates that 51% of US work activities are susceptible to automation6. McKinsey identified potential productivity growth of at least 2% per year over the next decade, with about 60% of it coming from digitization7.

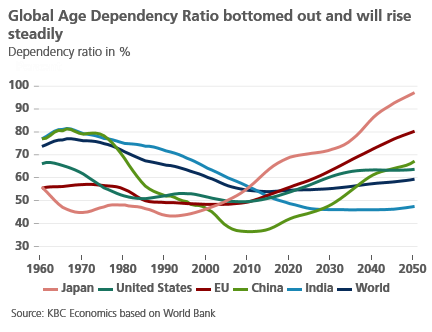

In 1994, Paul Krugman wrote that “Productivity isn't everything, but in the long run, it's almost everything”. He’s absolutely right. As dependency ratios rise steadily with our ageing population (see graph), labor productivity growth will be the key driver of economic growth (see our research report on Demographics). In a low-productivity scenario, social security systems will come under pressure and governments will face harsh budgetary trade-offs, while net real wages will likely remain stagnant. In a high-productivity scenario, rising economic growth will push debt-to-GDP levels downwards and provide sufficient budgetary space to tackle challenges such as climate change. Let’s hope the techno-optimists are right!

1 Robert Gordon, 2016, “The Rise and Fall of American Growth: The US Standard of Living since the Civil War”, Princeton University Press.

2 Seda Basihos, 2022, “Blue Screen of Death? Obsolescence and Structural Change in the Computer Age”.

3 Hulten and Nakamura, 2020, “Expanded GDP for Welfare Measurement in the 21st Century”.

4 OECD, 2015, “The Future of Productivity”.

5 Carl Benedikt Frey and Michael A. Osborne, 2013, “The Future of Employment: How Susceptible Are Jobs to Computerisation?”.

6 McKinsey Global Institute, 2017, “A Future That Works”, executive summary (mckinsey.com).

7 McKinsey, “Is the Solow Paradox Back?”.